Introduction

Today, as young global citizens, children everywhere have to learn English. Traditional methods are often expensive, unmotivating and in many instances ineffective (British Council, 2018). Teaching and learning English is a global industry currently valued at over $31 billion, with the digital sector representing 10 per cent of the whole, growing annually at around 6 per cent (Ambient Insight, 2015). The market is currently dominated by significant ‘traditional’ players (classroom/offline). Meanwhile, employers complain of the insufficient level of English skills among prospective employees, alongside the recognition that English is increasingly important for business and employment prospects. Demographic, cultural and economic trends suggest that a transformation of the size, shape and composition of the market is on the horizon, and that technology and consumer trends will shape the way English is learned, taught and assessed. Specifically, these trends suggest an increasing demand for personalized and purposeful ways to learn, with a focus on effective communication (British Council, 2018). Indeed, technology is offering solutions to the common challenges of the market, namely accessibility, affordability, scalability and efficacy. English is recognized as a critical skill, not least because it has been shown that mastering English leads to increased income potential, better life opportunities and an improved standard of living for individuals. Higher national standards of English impact on overall GDP within a country (McCormick, 2013).

It is within this context that Little Bridge, an online learning resource for 6–12 year olds, was created, aiming to:

ensure that no child is excluded from the global conversation (where English is the language of the internet)

support teachers and parents who are looking for solutions that address the global demand for English

foster global citizenship by encouraging the celebration of differences along with the recognition of what children have in common.

Little Bridge’s platform is based on the premise that both a social context and a real-world context are vital for effective language acquisition. Learning technologies can offer the opportunity for students to access the wider world outside their classroom (Dhanya, 2016). Little Bridge targets pupils aged 6–12, who engage with the platform at home or at school (Hartshorne et al., 2018). The platform has been designed to address many of the challenges facing young learners, their families and their teachers, such as fostering connections between home and school learning (Linse et al., 2014). The online community that Little Bridge offers allows young learners to practise real-world conversations with their peers, rather than memorizing lists of vocabulary or verb conjugations (Isbell, 2018). In addition, online, games-based learning in primary education has been shown to be most effective in improving pupil skills when pupils use the technology at home and it is reinforced during formal learning at school (Bakker et al., 2015). Research has also demonstrated that students who engage in online communities for language learning are more engaged in language learning activities (Akbari et al., 2016), a relationship that Little Bridge wanted to examine.

The world of Little Bridge

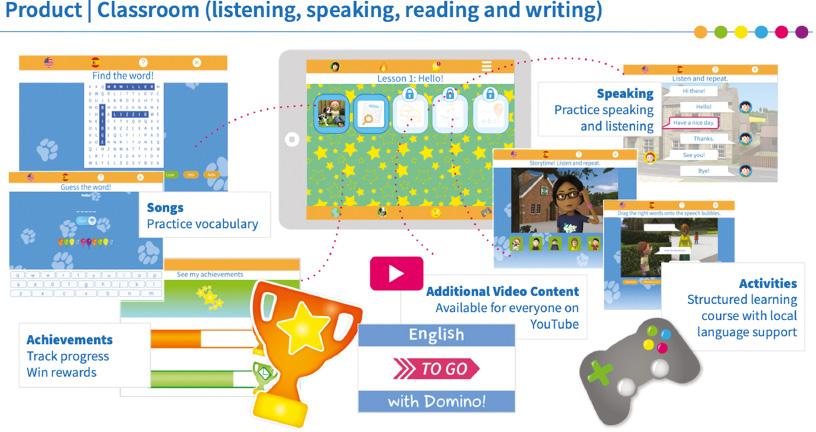

Little Bridge is an online English language learning platform that, since 2012, has served over ten thousand schools and a hundred and fifty thousand families in over a hundred countries. It offers a safe, online environment in which children learn English (vocabulary, grammar structures and communication) while interacting with practice activities in the platform and messaging their peers learning English from around the world, through the medium of English. The objective of Little Bridge is to improve the level of English language learning competencies of primary-aged children for whom English is not their first or native language and, with global citizenship in mind, to broaden access to this important global communication skill, alongside key attributes of curiosity and empathy. The platform and content have been created by a small team of ex-teachers, children’s content creators (with a background in award-winning children’s publications) and experienced engineers. The initial focus was on designing for engagement, using immersion and gamification techniques and iterative design methodology, with a clear user data feedback loop. The pedagogy that underpins Little Bridge ensures that the key language learning skills of listening, speaking, reading and writing are covered, and that the content aligns with recognized international standards for English language learning and curriculum.

When a child enters the Little Bridge platform, using unique login credentials, he or she is immediately immersed in an English-speaking world (see Figure 1). Very quickly, the characters and the places in the little English town of Little Bridge become familiar. English language acquisition occurs as stories about these characters unfold. The use and efficacy of stories in both first and second or foreign language learning is well documented (Lucarevschi, 2016). Diverse ethnicities and differing familial contexts are presented so that the learner at once feels comfortable, yet curious. Competence in English is grounded in children’s engagement with, and understanding of, these characters and stories as well as the wide variety of structured learning activities that the platform provides. All learning activities are consciously designed to build familiarity with new vocabulary and language structures. Children experience both receptive learning, in which they passively watch or listen as something is being demonstrated or explained, and, more importantly, productive learning, in which they actively apply the concepts they have just learned to produce language within a collaborative, age-appropriate environment, where there are clear examples of self- and peer-prompted ‘corrections’ and ‘improvements’ in the messages posted. English comprehension is aided by the abundance of visual cues. Because everything the user hears and reads is contextualized in a world in which children are soon at ease, and since language learning structures are introduced systematically to help build confidence, children rapidly become actively engaged in regular exchanges with other learners in the community to produce ‘real’ and purposeful language (Krashen, 1982).

The structured learning activities use gamification techniques, including a system of rewards that is linked to the individual student’s personal profile. When combined with the student’s desire to engage in the Little Bridge community, these rewards generate motivation so that students redo the activities in which they were unsuccessful, thus learning from their own mistakes. Core vocabulary and English language principles are repeated and reinforced, which both imitates conversational English speech and encourages mastery for learners.

Creating an individual profile and participating in a social community of their peers is key. Children produce language through a desire to communicate with others in the community. As teachers and parents globally have commented, it provides children with ‘broader horizons’ or a ‘window on the world’. All users of the platform can join a safe, fully moderated environment in Little Bridge to practise the English they are learning and at the same time build a circle of international friends. The act of participating in real-world conversations with children in other countries gives purpose and relevance to the experience of learning English, which can otherwise seem abstract and unreal (Bialystok, 1991; Golonka et al., 2014). Figure 2 shows how each learner can customize his or her user profile, which includes an avatar and a personal page, along with the available learning activities on the platform.

Developing research questions and methodology

Little Bridge joined the UCL EDUCATE programme (Cukurova et al., 2019) in the third cohort of companies, which started at the end of 2017. At the time they joined the EDUCATE programme the company had conducted some research with partners in various countries, including a large-scale study by the University of Chile in Santiago and smaller studies by the University of Saint Petersburg in Russia and the Future Schools organization in China. These studies have not been published, but they served to demonstrate to stakeholders (including ministries of education) the enjoyment and motivation to learn created by the Little Bridge platform, as well as the significant increase in achievement in the four key English language skills (reading, writing, speaking and listening), when compared to peers who had not used Little Bridge. They also helped the Little Bridge team develop additional hypotheses about the influence of the social community in Little Bridge. Indeed, since the Little Bridge platform had been in use for over five years at this point, the team had begun to develop their own hypotheses based on observed usage patterns and customer feedback they had received over time.

As part of EDUCATE, the Little Bridge team attended research training, which taught them the fundamentals of designing and conducting a research study. Companies participating in EDUCATE were also individually matched with research mentors, who worked with the companies one on one to support them in their research work. The EDUCATE mentors were all experienced academic researchers in the field of education technology, but many also had experience working in the private sector. This membership in multiple communities of practice allowed EDUCATE mentors to act as brokers, bridging the boundaries of academia and business (Akkerman and Bakker, 2011; Wenger, 1998). Mentors with this experience were able to help companies make meaning of academic artefacts and resolve any conflicts that arose between the academic and business communities (Star and Griesemer, 1989).

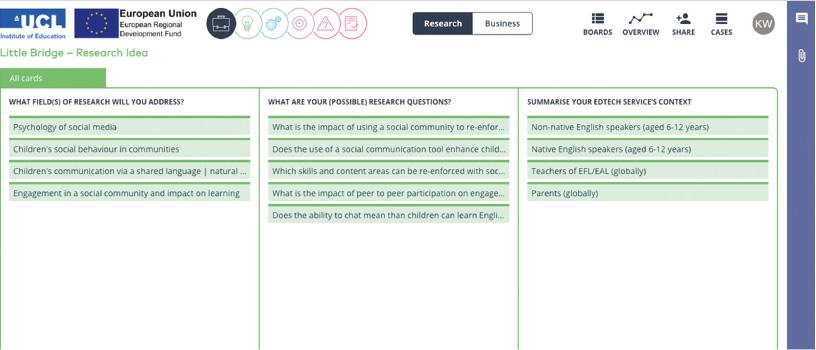

The Little Bridge team discussed a number of thesis questions with their mentor at their first EDUCATE research clinic session. In this clinic, the team and their EDUCATE mentor looked at Little Bridge’s Research Idea page on the UCL Lean platform (Figure 3) to get a sense of the research questions they wanted to answer. Little Bridge also came to the meeting with other research questions that they had recorded outside the Lean platform.

In discussions about the research questions depicted in Figure 3, as well as those recorded outside UCL Lean, the Little Bridge team converged on one central question: whether learners’ participation in the social community aspect of the platform (rather than just the assigned activities) was related to their language learning. Given that the social community was one of many features within the platform, this question more than any other seemed to represent what the team wanted to know about their product. The answer could inform the Little Bridge team as to how the social feature might be developed, where and how it should be positioned in the product, and the implications for the company’s future business/market strategy. Discussions with the EDUCATE mentor provided the framework for considered analysis of what was at the heart of Little Bridge’s mission and how research would be fully integrated into both the development and business functions, helping to bring clarity of purpose across the different teams and workstreams. Thus, as a result of these discussions with their EDUCATE mentor, Little Bridge drafted the central research question to guide their research: What is the relationship between student participation in the social community and language learning outcomes?

Once this question was agreed upon, the conversation turned to the data available to answer this question and its related sub-questions. Little Bridge had an advantage over many small enterprises in educational technology (edtech) in that they had been collecting data from users and customers for years in different formats, which they had never previously used. Specifically, they had captured anecdotal evidence via video customer testimonials and they had access to anonymized learner data from the platform itself.

Little Bridge and their EDUCATE mentor looked first at conducting an additional review of the video data in light of the newly agreed main research question. To collect these videos, Little Bridge visited 12 countries (France, Spain, Greece, Russia, Turkey, Morocco, Panama, Colombia, Mexico, Chile, Korea and the United Kingdom) and spoke to teachers at schools and language centres, as well as parents and learners. Their convenience sample of 39 institutions was made up of schools or language centres that had used the Little Bridge platform for at least one year. In total, Little Bridge interviewed 63 teachers, 47 parents and 172 students whose parents had granted permission for the children to appear on camera for these interviews. Little Bridge had originally collected this information for use in marketing materials as testimonials or informal case studies, and had not considered it as a viable data set to address their research questions until they spoke with their EDUCATE mentor. As the team described these videos to their mentor, it came to light that during these video interviews, customers and users spoke, frequently at length, about various aspects of the Little Bridge platform, including their thoughts on the social community. The Little Bridge team and their mentor explored the benefits of performing a further, more systematic analysis of the video data. Little Bridge would watch all videos again and record any instances in which respondents provided feedback related to their research question. They would also conduct a thematic analysis of the video content (Braun and Clarke, 2006) to uncover any other themes emerging from the anecdotal video data that might be worth exploring in terms of product development or implementation. The findings from this first data analysis would also help inform the subsequent analysis of data collected from the platform itself.

Qualitative data analysis

As part of Little Bridge’s business development and customer support strategy, frequent visits are arranged to meet schools and observe users (teachers and students) in the different territories in which the platform has been adopted. On these visits, Little Bridge records video testimonials from customers, including head teachers, academic directors, teachers, parents and the learners themselves. In some video testimonials, participants respond to a set of questions written by Little Bridge. Other video testimonials are more narrative in structure as participants describe their experience with the platform, how and why they used it, and the overall value they believe the platform delivered. Participants were also encouraged to make criticisms or suggestions that might improve Little Bridge as a classroom tool and provide better opportunities for its use at home.

Until Little Bridge joined the EDUCATE programme, this footage had been edited mainly for marketing purposes. However, it was clear that certain themes were recurring in the feedback from multiple customers and end users. The guidance provided by the EDUCATE mentor on how to think about this analysis provided the company with a sharper research mindset, and recognizing the value of this qualitative feedback, beyond generalized ‘marketing messages’, encouraged the team to lay solid foundations for subsequent steps in the research. The videos were reviewed again by Little Bridge’s Head of Education, who noted any recurring themes and then grouped them into 18 categories (see Table 1).

Table 1: The themes used for thematic analysis of the qualitative data

| Theme (in order of frequency discussed) | Content | Methodology | User behaviour | Impact |
|---|---|---|---|---|
| Enjoyment/engagement | X | X | X | |
| Building social profile (avatar and personal page) | X | X | X | |
| Messaging/friends around the world | X | X | X | |
| Global citizenship | X | X | | |
| Frequency of use | X | X | | |
| Speed of learning | X | | | |
| Confidence building | X | | | |
| Autonomy/independent learning | X | X | X | |
| Little Bridge as scaffold to learning | X | | | |
| Efficacy of rewards system (gamification/socialization) | X | X | | |
| Value of characters/stories | X | X | | |
| Value of teacher tools | X | X | X | |
| Family involvement | X | X | | |
| Academic success | X | X | X | |
| Perceived value of learning English | X | | | |
| Progress speaking English | X | X | X | |
| Student outcomes on international language assessment | X | X | X | |
| Home/school crossover | X | X | | |

Having identified these themes, the Head of Education acted as single coder for the videos; each time a theme was identified during a video, the time stamp and unique customer identifier were recorded. Each occurrence of the theme was also colour-coded according to whether the speaker was a teacher, parent or student. Finally, the themes were grouped into categories, according to whether each referred to the content of Little Bridge, the methodology of Little Bridge, an aspect of user behaviour or the impact of Little Bridge on language learning. This is represented in Table 1.
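The coding procedure described above can be pictured as a simple record-and-tally exercise. The following is a minimal sketch only: the `ThemeOccurrence` record, its field names, the identifier format and the example rows are all hypothetical illustrations, not the tool or data that Little Bridge used.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ThemeOccurrence:
    """One coded mention of a theme in a video (hypothetical structure)."""
    theme: str        # one of the 18 themes listed in Table 1
    customer_id: str  # unique customer identifier (format is an assumption)
    timestamp_s: int  # position of the mention in the video, in seconds
    speaker: str      # "teacher", "parent" or "student"


# Illustrative records only; these are NOT data from the study.
occurrences = [
    ThemeOccurrence("Enjoyment/engagement", "cust-001", 42, "student"),
    ThemeOccurrence("Messaging/friends around the world", "cust-001", 95, "teacher"),
    ThemeOccurrence("Enjoyment/engagement", "cust-002", 10, "parent"),
]


def tally_by_theme(records):
    """Count how often each theme was mentioned across all videos."""
    return Counter(r.theme for r in records)


def tally_by_theme_and_speaker(records):
    """Count mentions per (theme, speaker role) pair."""
    return Counter((r.theme, r.speaker) for r in records)


theme_counts = tally_by_theme(occurrences)
# Themes ordered from most to least frequently discussed.
print(theme_counts.most_common())
```

Keeping the speaker role on each record mirrors the colour-coding by teacher, parent or student, and sorting the tally by frequency corresponds to the ordering of themes in Table 1.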

Aside from overall student enjoyment or engagement with Little Bridge, this analysis revealed that the themes most frequently described by users in the videos were those relating to the community-focused areas of the Little Bridge experience, such as:

students posting messages to the community

students spending time building their personal profile, including their avatar and personal page

students building a global ‘friend network’

students receiving rewards (through ‘gamification’) that were publicized in the community, thereby contributing to the student’s profile.

Furthermore, these themes were often linked to speakers’ comments regarding pupil progress in language learning, such as speed of learning (that is, the ease and rapidity with which students began to acquire language competencies in English), Little Bridge providing a clear scaffold to learning, and overall confidence building. The pattern emerging from these video testimonials was enlightening. It helped the company to focus on their ‘communication-led’ methodology. This validated the hypothesis that Little Bridge had about the importance of the social community to language learning, and it guided the development of the rest of the study.

Developing the main research proposal

The focus of Little Bridge’s next EDUCATE clinic session was to discuss the draft proposal for the research they hoped to conduct using their existing platform data. Their first phase of qualitative data analysis had begun to confirm their hypothesis that learners’ participation in the social community did have a relationship with their learning outcomes. However, the EDUCATE experience underlined that they needed to analyse more than the anecdotal video data in order for their argument to have any validity. Thus the topic of discussion with the research mentor became how to develop a mixed-methods study that also incorporated quantitative data analysis. These conversations continued to provide Little Bridge with clarity about the design of the research project and how it might contribute value to the business.

A first draft of Little Bridge’s research proposal had already been reviewed by the EDUCATE research mentor, so the session focused on comments made in the proposal template used in the EDUCATE programme. Little Bridge intended to study students (users) whom they labelled as ‘SuperPals’. Little Bridge define SuperPals as those learners who both actively participate in the social features of the site and also perform well on the learning activities. At the time of the meeting, even the notion of a SuperPal remained a hypothesis and ‘active participation’ and academic ‘performance’ were not fully defined. In other words, behaviours of some users had been observed to resemble a SuperPal, but this had not been measured in a systematic way. In order to clearly identify users who could be classified as SuperPals from the large data set, Little Bridge needed an objective set of criteria against which to measure all users. If a large enough sample of users could be identified as SuperPals, their behaviours on the platform could be observed and the group could become an influential source of data for decision-making at Little Bridge.

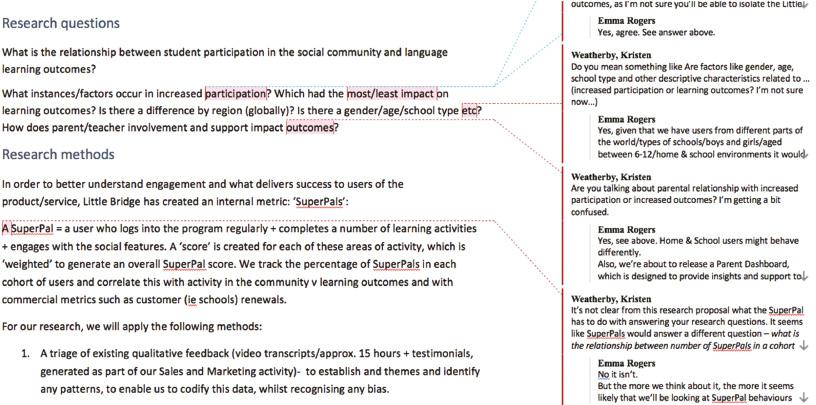

At this point, Little Bridge was at a crossroads in terms of their future product strategy. They needed to identify evidence that would guide the development of their product and platform, as well as their marketing and customer engagement. They hoped that an investigation of their SuperPal hypothesis might provide this evidence. Thus, the discussion in the EDUCATE clinic turned to revising Little Bridge’s main research question in order to make it inclusive of this new focus. The team and their mentor also looked at the two research sub-questions and discussed how to make them clearer. An excerpt of these comments in the research proposal is shown in Figure 4.

Figure 4: Little Bridge and EDUCATE mentor discussion of research questions in draft research proposal (source: author)

Once the research questions were revised, Little Bridge needed to come up with a plan for analysing the data from their platform. Based on customer subscription renewal data, they had identified that schools which renewed their subscriptions were also more likely to have higher numbers of SuperPals, which suggested a further possible hypothesis, namely that the number of SuperPals in a school was related to customer retention. However, after discussion with their mentor, it was decided that the team would examine the anonymized data from the platform to better understand the behaviours and background characteristics of SuperPals. The final research questions driving this study thus became:

What is the relationship between student participation in the Little Bridge social community and language learning outcomes?

Do children who participate in the social community also complete more learning activities?

Do children who participate in the social community get higher scores in learning activities?

Does participation in the social community increase as children progress through the learning activities?

Does a child’s level (in terms of progress through the structured content) in the learning activities correlate to their use of the social community?

Defining the sample

At the start of the quantitative data analysis phase, the complete set of data on the Little Bridge platform comprised over 1.2 million user records with their related transaction history (activity scores, login times and so on). These user records were created over a number of years and, as such, a large number became inactive over time. Some user records were never used, such as when a school set up accounts for a large number of students but only introduced the Little Bridge platform to a subset of them. Also, a significant number of home users (individual consumers rather than schools) had signed up for free trial accounts with Little Bridge and had never progressed any further.

As a result, a certain amount of data cleaning was necessary in order to identify the desired sample for the study. Primarily, it was impractical to export and analyse all 1.2 million user records. To reduce the data extracted from the platform to a manageable size, the filters shown in Table 2 were applied to the data to generate the data set ultimately used in the analysis.

Table 2: Export filter criteria used to produce the data set from the Little Bridge platform database

| Filter | Value | Explanation |
|---|---|---|
| Created date | 2015-03-01 | Only users created after this date. This date was chosen as the product features were more stable after this date, providing a more consistent user experience. |
| No. of logins | > 0 | Only users who have logged in at least once. This indicates that a user has been active to any degree on the platform. |
| Customer type | School | Only include school users. These users would always have access to the social messaging feature and all learning activities. Home (consumer) users’ access to various features was dependent on the subscription purchased and could therefore affect their behaviour in Little Bridge. |
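The three export filters can be expressed as a single predicate applied to each user record. This is a sketch under stated assumptions: the `UserRecord` type and the field names `created` and `customer_type` are hypothetical, the example rows are invented, and the actual export was presumably run against the platform database rather than in Python.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class UserRecord:
    """Minimal stand-in for a platform user record (hypothetical fields)."""
    user_id: int
    created: date
    logins: int
    customer_type: str  # "School" or "Home"


# Filter value taken from the export criteria: users created after this date.
CREATED_AFTER = date(2015, 3, 1)


def passes_export_filters(user: UserRecord) -> bool:
    """Return True if the user satisfies all three export filters."""
    return (
        user.created > CREATED_AFTER          # created after 2015-03-01
        and user.logins > 0                   # logged in at least once
        and user.customer_type == "School"    # school users only
    )


# Illustrative records only; these are NOT data from the study.
users = [
    UserRecord(1, date(2016, 1, 10), 12, "School"),  # kept
    UserRecord(2, date(2014, 9, 1), 30, "School"),   # created too early
    UserRecord(3, date(2016, 5, 2), 0, "School"),    # never logged in
    UserRecord(4, date(2017, 2, 3), 8, "Home"),      # home (consumer) user
]

exported = [u for u in users if passes_export_filters(u)]
print([u.user_id for u in exported])
```

The comparison on the creation date is strict because the filter is described as "after this date"; whether users created on 2015-03-01 itself were included is not stated.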

The data exported using these filters yielded 88,222 records (1 record per user). For each user, a number of attributes were also exported. These are shown in Table 3.

Table 3: User attributes from Little Bridge data set

| Attribute | Type | Description |
|---|---|---|
| user_id | integer | Unique identifier for a user record |
| first_login | date | Date user first logged in |
| logins | integer | Number of logins in the period |
| act_unique | integer | Number of unique activities attempted in the period |
| act_50pc_unique | integer | Number of completed activities. A completed activity is one in which the user has achieved a score >= 50 per cent |
| avg_score-pc | number | Average score across all the user’s activities |
| post_acc_mod | integer | Message posts accepted by moderator |
| post_rej_all | integer | Message posts rejected |
| post_acc_sm | integer | Message posts accepted automatically |

The attributes helped describe the users and their usage patterns on the site. For aggregate values, the period over which each user’s data was included in the data set was set at nine months, to allow the user the opportunity to engage with the Little Bridge program. This nine-month usage period began on the date that the user first logged in to Little Bridge. The total number of posts (messages written by the learner and uploaded to the social community) that each user created was also calculated, using the formula:
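The formula itself is not reproduced in this text. One plausible reading, offered here purely as an assumption, is that the total is the sum of the three post counters in Table 3 (`post_acc_mod`, `post_rej_all` and `post_acc_sm`). The sketch below illustrates that reading together with the nine-month usage window; treating "nine months" as calendar months, and the helper names `add_months`, `usage_window` and `total_posts`, are likewise assumptions.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date forward by whole calendar months, clamping the day
    to the last valid day of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def usage_window(first_login: date):
    """Nine-month period starting on the user's first login
    (ASSUMPTION: calendar months)."""
    return first_login, add_months(first_login, 9)


def total_posts(post_acc_mod: int, post_rej_all: int, post_acc_sm: int) -> int:
    """ASSUMPTION: total posts is the sum of moderator-accepted,
    rejected and automatically accepted posts."""
    return post_acc_mod + post_rej_all + post_acc_sm


start, end = usage_window(date(2015, 3, 15))
print(start, end)
print(total_posts(post_acc_mod=3, post_rej_all=1, post_acc_sm=2))
```

If rejected posts were in fact excluded from the total, the `post_rej_all` term would simply be dropped.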

Analysis of quantitative data

In order to look at the difference in learning outcomes between users with no participation in the social community and those with a high participation, two groups of users were created based on the frequency with which each user posted to the Little Bridge platform. These groups were:

Group 1 (‘Low posters’): This group included users with zero total posts but at least one activity attempted. Requiring that the user had attempted one activity demonstrated that the user knew how to navigate to the learning activities in the user interface. Group 1 contained 35,372 users.

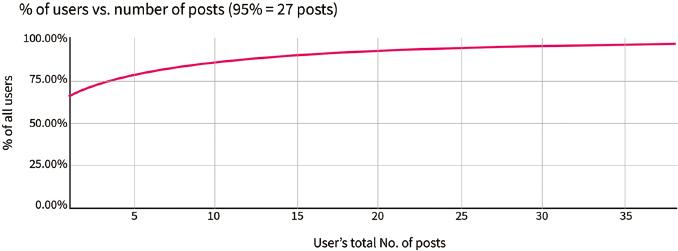

Group 2 (‘High posters’): This group included the top 5 per cent of users measured by the total number of posts a user made. The 95th percentile was calculated as 27 posts, so this group includes users who posted 27 or more messages (see Figure 5). Group 2 contained 4,633 users.

Each user was then assigned either to Group 1 (C1) or Group 2 (C2), or left unassigned if they met neither set of criteria:

| | Count |
|---|---|
| Total number of users exported: | 88,222 |
| Number of users in Group 1: | 35,372 |
| Number of users in Group 2: | 4,633 |
| Unassigned users: | 48,217 |
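The assignment rule can be sketched as follows. The threshold of 27 posts and the group criteria come from the description above; the function names and the nearest-rank percentile method are assumptions, since the text does not say how the 95th percentile was computed.

```python
def percentile_threshold(values, pct: int):
    """Nearest-rank percentile (ASSUMPTION: the article does not state
    which percentile method was used)."""
    ordered = sorted(values)
    # Integer ceiling of pct/100 * n, avoiding floating-point rounding.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[max(rank, 1) - 1]


def assign_group(total_posts: int, act_unique: int, high_threshold: int):
    """Return "C1", "C2" or None (unassigned) for a single user."""
    if total_posts == 0 and act_unique >= 1:
        return "C1"  # no posts, but at least one activity attempted
    if total_posts >= high_threshold:
        return "C2"  # at or above the 95th percentile of total posts
    return None      # meets neither set of criteria


HIGH_THRESHOLD = 27  # 95th percentile reported in the study

print(assign_group(0, 3, HIGH_THRESHOLD))   # zero posts, some activity
print(assign_group(40, 10, HIGH_THRESHOLD)) # frequent poster
print(assign_group(5, 10, HIGH_THRESHOLD))  # occasional poster

# The reported counts are internally consistent:
print(88_222 - 35_372 - 4_633)
```

Users with zero posts and zero activities attempted fall into the unassigned set, as do users who posted between 1 and 26 messages.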

The unassigned user data were excluded for this study. Only data from those users assigned to Group 1 and Group 2 were used in the subsequent analyses. These data were analysed to answer the following two research questions:

Do children who participate in Little Bridge’s social community also complete more learning activities?

Do children who participate in the social community have a higher average score in learning activities?

To answer these questions, random samples of equal size (N=4,600) were selected from each group for comparison. For each user, the number of learning activities completed and the average score on learning activities (as a percentage) were used to answer questions 1 and 2, respectively. A learning activity counts as completed when the child achieves a score of 50 per cent or above, which also allows the child to move on to the next activity.

The average score was calculated as the average of all individual recorded scores for that user (see Table 4).

Table 4: Mean and median averages for C1 and C2

| | C1 (low post) | C2 (high post) |
|---|---|---|
| Number of activities completed by group* | | |
| Total | 98,447 | 236,343 |
| Mean | 21 | 51 |
| Median | 11 | 45 |
| Average score by group (%)* | | |
| Mean | 80 | 86 |
| Median | 88 | 91 |

*N=4,600

To check the validity of the comparison, both the mean and the median were calculated for each group. The objective in calculating the mean was to see the variation in outcomes between the two groups. The median was then calculated for each group to validate the original analyses and ensure that results were not skewed, for example, by any significant outliers in the data that could influence the mean in either direction. These analyses revealed that users in Group 2 completed more activities and had higher average scores on the learning activities, regardless of whether the mean or the median was used.
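The sampling and summary steps can be sketched with the Python standard library. The function names, the fixed seed and the example values are illustrative assumptions; the study's own tooling is not described.

```python
import random
import statistics


def equal_random_samples(group1, group2, n: int, seed: int = 0):
    """Draw a random sample of size n, without replacement, from each group.
    The seed is fixed only to make this illustration repeatable."""
    rng = random.Random(seed)
    return rng.sample(group1, n), rng.sample(group2, n)


def summarise(values):
    """Total, mean and median of a list of per-user values."""
    return {
        "total": sum(values),
        "mean": statistics.mean(values),
        "median": statistics.median(values),
    }


# Illustrative per-user activity counts only; NOT data from the study.
low_posters = [0, 2, 5, 8, 11, 14, 30, 120]
high_posters = [20, 35, 40, 45, 48, 52, 60, 75, 90, 110]

sample1, sample2 = equal_random_samples(low_posters, high_posters, n=5)
print(summarise(sample1))
print(summarise(sample2))

# A single outlier pulls the mean far more than the median:
print(summarise([1, 2, 3, 100]))
```

As a consistency check on the reported figures, dividing the totals of 98,447 and 236,343 completed activities by N=4,600 gives means of about 21.40 and 51.38, matching the unrounded means reported with the t-test results.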

To further investigate any variation between the two groups, a two-sample, one-tailed t-test was performed to test whether the difference in means between the two independent groups could be attributed to random chance or was instead statistically significant. As none of the users in C1 appeared in C2, the groups were considered to be independent, making them suitable for this type of t-test. The t-test assumes that the data are normally distributed, in which case the mean equals the median. In the Little Bridge data, both groups show a difference between their means and medians, indicating non-normal distributions. However, when the sample size is sufficiently large (n>100) the t-test is robust to departures from normality (Lumley et al., 2002). For both tests, an alpha of 0.05 and a hypothesized mean difference of 0 were set. The results of the t-tests are summarized in Table 5.

Table 5: T-test results

| | C1 (low post) | C2 (high post) |
|---|---|---|
| Number of activities completed difference by group | | |
| Mean | 21.4015 | 51.3789 |
| Variance | 676.4904 | 1677.7849 |
| Standard deviation | 26.0094 | 40.9608 |
| Observations | 4600 | 4600 |
| Observed mean difference | –29.9774 | |
| P (T<=t) one-tail | 2.31 × 10⁻³¹⁷ | |
| Average score difference by group (%) | | |
| Mean | 0.8225 | 0.8598 |
| Variance | 0.0407 | 0.0301 |
| Observations | 4600 | 4600 |
| Observed mean difference | –0.0373 | |
| P (T<=t) one-tail | 1.32 × 10⁻²⁷ | |
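The size of the test statistic behind these results can be recovered from the summary statistics alone. The sketch below uses the unequal-variance (Welch) form of the two-sample t-test. That choice is an assumption, made because the two group variances differ considerably; the article does not state which variant was used, and the sketch does not attempt to reproduce the reported p-values.

```python
import math


def welch_t(mean1, var1, n1, mean2, var2, n2):
    """Welch's two-sample t statistic and approximate degrees of freedom,
    computed from summary statistics (ASSUMPTION: unequal variances)."""
    se1 = var1 / n1  # squared standard error of the first mean
    se2 = var2 / n2  # squared standard error of the second mean
    t = (mean1 - mean2) / math.sqrt(se1 + se2)
    # Welch-Satterthwaite approximation for the degrees of freedom.
    df = (se1 + se2) ** 2 / (se1 ** 2 / (n1 - 1) + se2 ** 2 / (n2 - 1))
    return t, df


# Summary statistics as reported for the two groups (C1 first, then C2).
t_act, df_act = welch_t(21.4015, 676.4904, 4600, 51.3789, 1677.7849, 4600)
t_score, df_score = welch_t(0.8225, 0.0407, 4600, 0.8598, 0.0301, 4600)

print(f"activities completed: t = {t_act:.2f}, df = {df_act:.0f}")
print(f"average score:        t = {t_score:.2f}, df = {df_score:.0f}")
```

Under these assumptions the statistics come out at roughly -41.9 for activities completed and -9.5 for average score. Both are far beyond the one-tailed critical value of about -1.645 at alpha = 0.05 for samples of this size, in line with the conclusion that both differences are significant.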

These t-test results indicate that, for both the difference in average score and the difference in the number of activities completed, the probability of observing a difference this large by chance alone is practically zero (p < 0.001). This is much lower than the threshold set by Little Bridge (alpha = 0.05) and means that the difference observed is statistically significant. In other words, the data show that the Little Bridge children who are the most active participants in the social network (Group 2) also complete more learning activities and achieve better results in the learning activities than those with the lowest social participation rates (Group 1). This confirms the hypothesis that there is a relationship between social behaviour and learning outcomes.

Discussion of findings and implications for Little Bridge

At the highest level, conducting this research had a direct impact on Little Bridge’s plans and direction in terms of product development and future research. It also gave focus to the entire team, influencing how they message and market Little Bridge to potential customers and investors.

The research-backed approach has had a direct impact on Little Bridge’s future product development. The study confirmed the importance of real-world, authentic tasks such as connecting young language learners and encouraging their communication. The company is now introducing further support to the learner to aid them in this communication, including a ‘bank’ of learning content that will be provided to the user according to their immediate need, related to performance data, as indicated during the communication process. It is now focused on applying these additional support tools and incentives, using machine learning, to ensure that more users experience positive engagement in the community, more quickly. The company has introduced agile processes based on the EDUCATE mentor’s recommendation to test and refine these features.

The company is also exploring new opportunities in the direct-to-consumer market segment. A product in which instruction is problem-centred, combining real-life experience with supporting direct instruction – a notion often cited but generally not applied (Merrill, 2002) – is well positioned for delivery to individual families who are seeking to support their children’s learning beyond the classroom. Likewise, it enables the provision of meaningful instruction for children who are not receiving formal or effective English language learning in school. This often includes marginalized groups, such as girls and rural dwellers. This new direction is fully aligned with the company’s mission to democratize access to quality learning and to place meaningful content firmly at the heart of education, through guided immersion and deliberate practice. It ensures a real purpose to the acquisition of English skills as larger numbers of more diverse students are able to join the global conversation at Little Bridge, and then beyond in the multitude of English-speaking contexts.

Implications for future research

The research methods used in this study entailed comparing two groups of users and were driven by the main research question. Little Bridge is now interested in conducting future research that examines all users in the data set, rather than limiting the study to the most and least frequent participants, to study the possible correlation between the level of a user’s social interaction and outcomes in the learning activities. It is hoped that this approach will make better use of the available data and provide an even more compelling result. Since Little Bridge has also observed that significant cohorts of children from countries where English is a first language have joined the community, it is also now looking to create a future research project to consider the impact of these users, if any, on overall language acquisition across its user base and the strength of the community for collaborative learning.

Studies of this nature would not have been considered possible by Little Bridge before participation in EDUCATE. This article also serves as a case study of sorts, describing how companies working within incubator-type settings can learn from the expertise provided by these programmes. In the case of Little Bridge, working within the EDUCATE programme has helped to embed a research mindset in the company’s approach to all of their work.